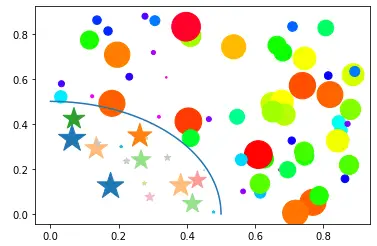

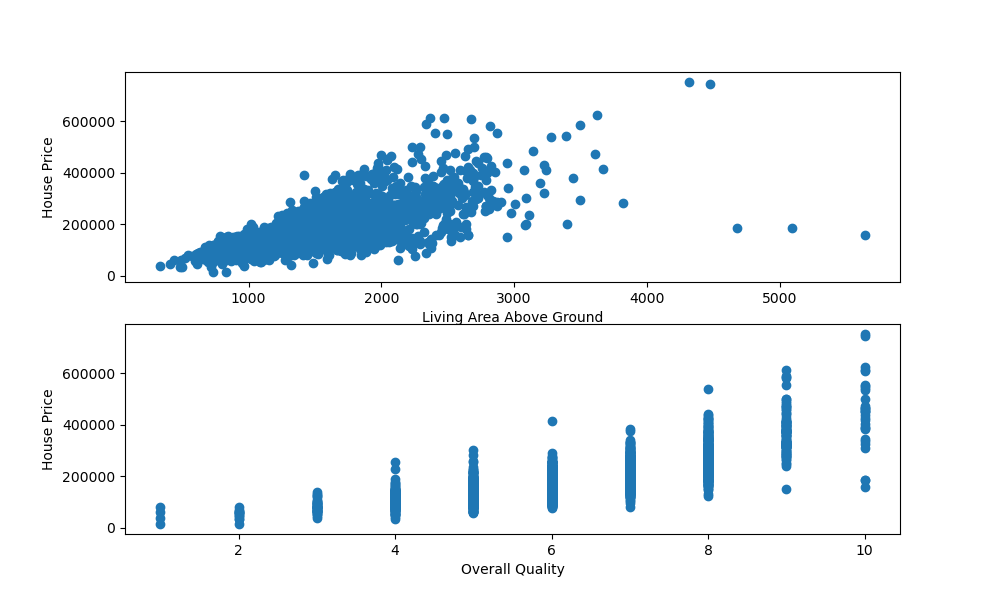

Of course, this ingredient list of classification neural network components will vary depending on the problem you're working on.īut it's more than enough to get started. SGD (stochastic gradient descent), Adam (see torch.optim for more options) Usually ReLU (rectified linear unit) but can be many othersīinary crossentropy ( torch.nn.BCELoss in PyTorch)Ĭross entropy ( torch.nn.CrossEntropyLoss in PyTorch) Problem specific, minimum = 1, maximum = unlimitedġ per class (e.g. 5 for age, sex, height, weight, smoking status in heart disease prediction) Architecture of a classification neural network ¶īefore we get into writing code, let's look at the general architecture of a classification neural network. Let's put everything we've done so far for binary classification together with a multi-class classification problem.Ġ. Putting it all together with multi-class classification We used non-linear functions to help model non-linear data, but what do these look like?Ĩ. So far our model has only had the ability to model straight lines, what about non-linear (non-straight) lines? We've trained an evaluated a model but it's not working, let's try a few things to improve it. Our model's found patterns in the data, let's compare its findings to the actual ( testing) data. Making predictions and evaluating a model (inference)

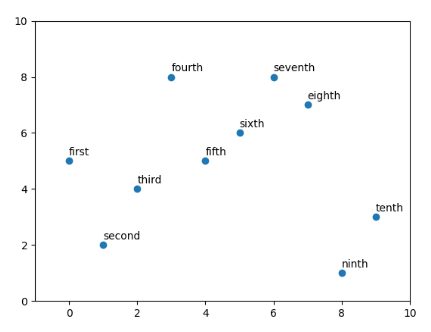

We've got data and a model, now let's let the model (try to) find patterns in the ( training) data.Ĥ. Here we'll create a model to learn patterns in the data, we'll also choose a loss function, optimizer and build a training loop specific to classification. Getting binary classification data readyĭata can be almost anything but to get started we're going to create a simple binary classification dataset.Ģ. Neural networks can come in almost any shape or size, but they typically follow a similar floor plan.ġ.

Specifically, we're going to cover: Topic PyTorch Workflow.Įxcept instead of trying to predict a straight line (predicting a number, also called a regression problem), we'll be working on a classification problem. In this notebook we're going to reiterate over the PyTorch workflow we coverd in 01. Putting things together by building a multi-class PyTorch modelĨ.1 Creating mutli-class classification dataĨ.2 Building a multi-class classification model in PyTorchĨ.3 Creating a loss function and optimizer for a multi-class PyTorch modelĨ.4 Getting prediction probabilities for a multi-class PyTorch modelĨ.5 Creating a training and testing loop for a multi-class PyTorch modelĨ.6 Making and evaluating predictions with a PyTorch multi-class modelĩ. Replicating non-linear activation functionsĨ.

Improving a model (from a model perspective)ĥ.1 Preparing data to see if our model can model a straight lineĥ.2 Adjusting model_1 to fit a straight lineĦ.1 Recreating non-linear data (red and blue circles)Ħ.4 Evaluating a model trained with non-linear activation functionsħ. Make predictions and evaluate the modelĥ. Make classification data and get it readyġ.2 Turn data into tensors and create train and test splitsģ.1 Going from raw model outputs to predicted labels (logits -> prediction probabilities -> prediction labels)Ĥ. Architecture of a classification neural networkġ.
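As a rough sketch of how the architecture ingredients in this section might fit together in PyTorch, the block below wires up a small binary classifier and a multi-class variant. The toy data (8 random samples with 5 features), the hidden layer size of 16, the learning rate of 0.1 and the choice of 3 classes are all assumptions made for illustration, not values from this notebook.

```python
import torch
from torch import nn

torch.manual_seed(42)  # make the toy example reproducible

# Hypothetical toy data: 8 samples, 5 features each, binary labels (0.0 or 1.0)
X = torch.randn(8, 5)
y = torch.randint(0, 2, (8,)).float()

# Binary classifier: 5 input features -> 1 hidden layer with ReLU -> 1 output unit
model = nn.Sequential(
    nn.Linear(in_features=5, out_features=16),  # hidden size 16 is an arbitrary choice
    nn.ReLU(),
    nn.Linear(in_features=16, out_features=1),
)

loss_fn = nn.BCELoss()  # expects probabilities in [0, 1], so sigmoid is applied first
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

# One training step: forward pass, loss, zero gradients, backward pass, update
model.train()
logits = model(X).squeeze(dim=1)   # raw model outputs, shape [8]
probs = torch.sigmoid(logits)      # logits -> prediction probabilities
loss = loss_fn(probs, y)
optimizer.zero_grad()
loss.backward()
optimizer.step()

# Inference: logits -> prediction probabilities -> prediction labels
model.eval()
with torch.no_grad():
    pred_probs = torch.sigmoid(model(X).squeeze(dim=1))
    pred_labels = torch.round(pred_probs)  # 0.0 or 1.0 per sample

# Multi-class variant: one output unit per class (3 classes assumed here)
num_classes = 3
y_multi = torch.randint(0, num_classes, (8,))  # integer class indices
multi_model = nn.Sequential(
    nn.Linear(in_features=5, out_features=16),
    nn.ReLU(),
    nn.Linear(in_features=16, out_features=num_classes),
)
multi_loss_fn = nn.CrossEntropyLoss()  # takes raw logits and integer class labels
multi_logits = multi_model(X)          # shape [8, 3]
multi_loss = multi_loss_fn(multi_logits, y_multi)
multi_labels = torch.softmax(multi_logits, dim=1).argmax(dim=1)  # predicted class per sample
```

In practice `torch.nn.BCEWithLogitsLoss` is often preferred over `torch.nn.BCELoss` because it combines the sigmoid and the loss in a single, more numerically stable step. The explicit sigmoid is kept here to make the logits -> prediction probabilities -> prediction labels path visible.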
